Automating the Conversion of Natural Language Fiction to Multi-Modal 3D Animated Virtual Environments

Kevin Glass

August, 2008

Department of Computer Science, Rhodes University


Welcome to the accompanying project webpage for the doctoral thesis. This page hosts the multimedia content referenced in the thesis, along with links to related websites and published papers on this topic.

Dissertation:


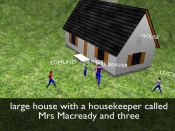

Abstract: Popular fiction books describe rich visual environments that contain characters, objects, and behaviour. This research develops automated processes for converting text sourced from fiction books into animated virtual environments and multi-modal films. This involves the analysis of unrestricted natural language fiction to identify appropriate visual descriptions, and the interpretation of the identified descriptions for constructing animated 3D virtual environments.

The goal of the text analysis stage is the creation of annotated fiction text, which identifies visual descriptions in a structured manner. A hierarchical rule-based learning system is created that induces patterns from example annotations provided by a human, and uses these for the creation of additional annotations. Patterns are expressed as tree structures that abstract the input text on different levels according to structural (token, sentence) and syntactic (parts-of-speech, syntactic function) categories. Patterns are generalized using pair-wise merging, where dissimilar sub-trees are replaced with wild-cards. The result is a small set of generalized patterns that are able to create correct annotations. A set of generalized patterns represents a model of an annotator's mental process regarding a particular annotation category.

Annotated text is interpreted automatically for constructing detailed scene descriptions. This includes identifying which scenes to visualize, and identifying the contents and behaviour in each scene. Entity behaviour in a 3D virtual environment is formulated using time-based constraints that are automatically derived from annotations. Constraints are expressed as non-linear symbolic functions that restrict the trajectories of a pair of entities over a continuous interval of time. Solutions to these constraints specify precise behaviour. We create an innovative quantified constraint optimizer for locating sound solutions, which uses interval arithmetic for treating time and space as contiguous quantities. This optimization method uses a technique of constraint relaxation and tightening that allows solution approximations to be located where constraint systems are inconsistent (an ability not previously explored in interval-based quantified constraint solving).

3D virtual environments are populated by automatically selecting geometric models or procedural geometry-creation methods from a library. 3D models are animated according to trajectories derived from constraint solutions. The final animated film is sequenced using a range of modalities including animated 3D graphics, textual subtitles, audio narrations, and foleys.

Hierarchical rule-based learning is evaluated over a range of annotation categories. Models are induced for different categories of annotation without modifying the core learning algorithms, and these models are shown to be applicable to different types of books. Models are induced automatically with accuracies ranging between 51.4% and 90.4%, depending on the category. We show that models are refined if further examples are provided, and this supports a boot-strapping process for training the learning mechanism.

The task of interpreting annotated fiction text and populating 3D virtual environments is successfully automated using our described techniques. Detailed scene descriptions are created accurately, where between 83% and 96% of the automatically generated descriptions require no manual modification (depending on the type of description). The interval-based quantified constraint optimizer fully automates the behaviour specification process. Sample animated multi-modal 3D films are created using extracts from fiction books that are unrestricted in terms of complexity or subject matter (unlike existing text-to-graphics systems). These examples demonstrate that: behaviour is visualized that corresponds to the descriptions in the original text; appropriate geometry is selected (or created) for visualizing entities in each scene; sequences of scenes are created for a film-like presentation of the story; and that multiple modalities are combined to create a coherent multi-modal representation of the fiction text.

This research demonstrates that visual descriptions in fiction text can be automatically identified, and that these descriptions can be converted into corresponding animated virtual environments. Unlike existing text-to-graphics systems, we describe techniques that function over unrestricted natural language text and perform the conversion process without the need for manually constructed repositories of world knowledge. This enables the rapid production of animated 3D virtual environments, allowing the human designer to focus on creative aspects.
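
As a rough illustration of the pair-wise merging mentioned in the abstract, the Python sketch below generalizes two small pattern trees by replacing dissimilar sub-trees with a wild-card. It is not the thesis implementation: the `Node` class, the `merge` and `matches` functions, the example labels, and the rule that any mismatch in label or child count collapses to a single wild-card are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Tuple

WILDCARD = "*"  # hypothetical marker for "any sub-tree"


@dataclass(frozen=True)
class Node:
    """A pattern-tree node: a label plus an ordered tuple of children."""
    label: str
    children: Tuple["Node", ...] = ()


def merge(a: Node, b: Node) -> Node:
    """Generalize two trees into one pattern that matches both."""
    if a == b:
        return a  # identical sub-trees are kept unchanged
    if a.label != b.label or len(a.children) != len(b.children):
        return Node(WILDCARD)  # dissimilar sub-trees become a wild-card
    # Same label and arity: keep the node and merge children pair-wise.
    return Node(a.label, tuple(merge(x, y) for x, y in zip(a.children, b.children)))


def matches(pattern: Node, tree: Node) -> bool:
    """Return True if the (possibly generalized) pattern covers the tree."""
    if pattern.label == WILDCARD:
        return True
    return (
        pattern.label == tree.label
        and len(pattern.children) == len(tree.children)
        and all(matches(p, t) for p, t in zip(pattern.children, tree.children))
    )


def sentence(noun: str, verb: str) -> Node:
    """Build a toy two-level tree: sentence -> part-of-speech -> token."""
    return Node("sentence", (Node("noun", (Node(noun),)), Node("verb", (Node(verb),))))


s1 = sentence("Alice", "ran")
s2 = sentence("Bob", "ran")
pattern = merge(s1, s2)  # the noun token is generalized, the verb token is kept
```

In this toy case the merged pattern covers any sentence tree whose verb token is "ran", regardless of the noun token, which mirrors how a small set of generalized patterns can stand in for many example annotations.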
